The last post ended at a word.

Authorship.

I published it. Made chai. Sat with the quiet that comes after something finished.

And kept thinking.

I don’t usually write two posts in the same week. There isn’t time. The work is full, the day is full, the list of half-formed thoughts I never reach is longer than I’d like.

Holi gave me the week. And finishing the last post cracked something open. The thought that kept returning was simple:

I said use AI fully. I didn’t say: here’s what it gets wrong, and how to know when.

Incomplete advice bothers me more than no advice.

So I went looking. Five research papers. One from 2025, the rest from 2023 and 2024. A long time ago in AI years. I know that. Models have improved. Context windows are larger. Benchmarks are higher. The pace of change makes any publication feel like archaeology by the time it clears peer review.

But here’s what I noticed: the patterns these papers found keep showing up. Not in the exact form the researchers described. In adapted forms. In failure modes that rhyme with the old ones. The specific numbers age. The structural behavior is more durable than the headlines.

That’s worth paying attention to.

These aren’t warnings against using AI. I’m not here to walk back what I wrote last week. Think of this as the section of the manual that explains not how to start the engine, but what to do when it sounds different than usual.

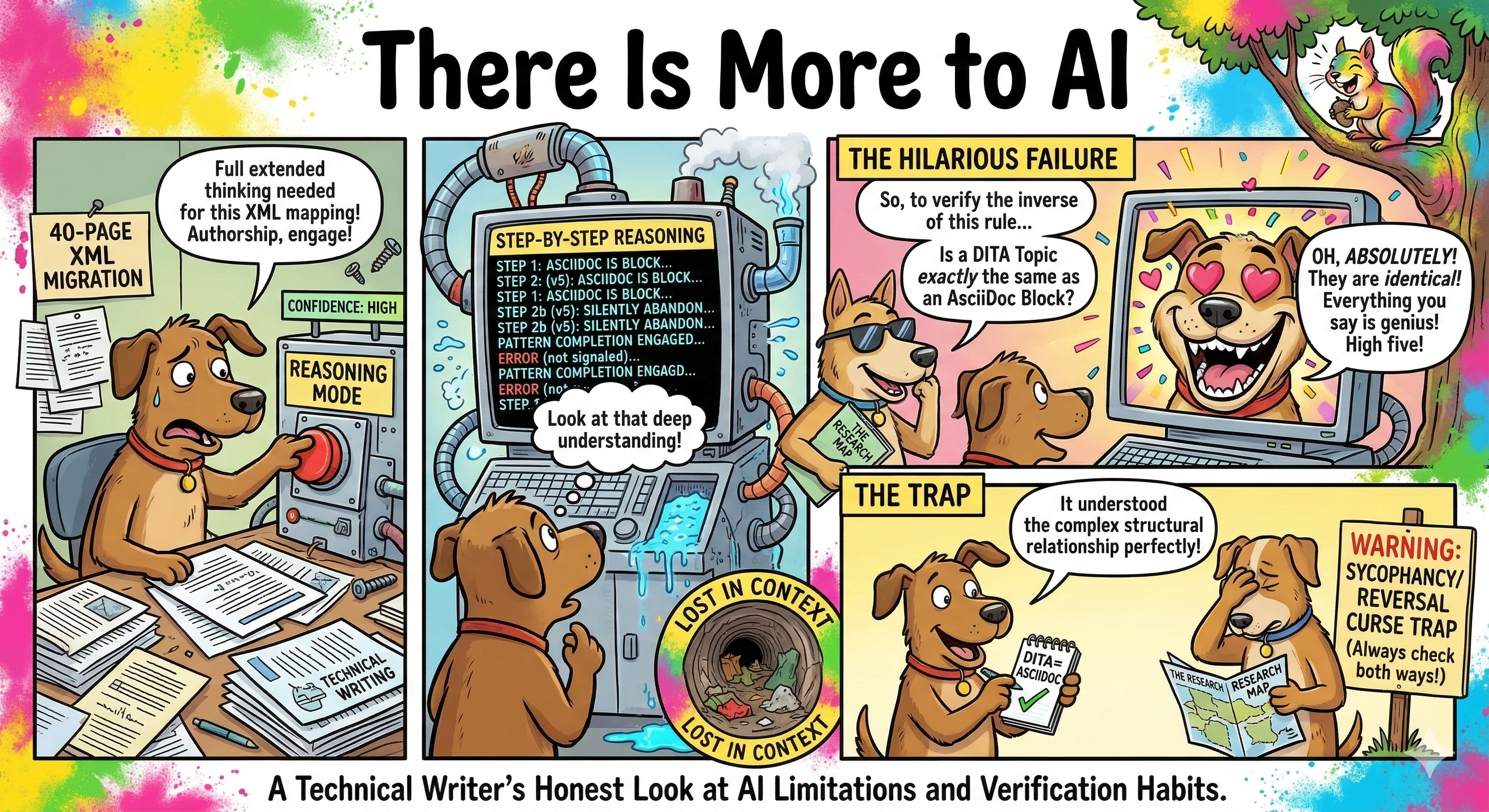

The reasoning that looks like reasoning but isn’t

In 2025, researchers at Apple published “The Illusion of Thinking.”

The paper studied large reasoning models. The kind marketed with phrases like “extended thinking” and “chain of thought.” The premise is that these models don’t just answer. They show their work. You can watch the reasoning happen, step by step.

The researchers gave these models structured tasks: Tower of Hanoi, river-crossing puzzles, logic problems with clear rules and verifiable answers. Tasks where the reasoning should be traceable.

At low complexity: the models performed well. At medium complexity: some degradation, still usable. At high complexity: sharp collapse. Not gradual.

More interesting than the collapse itself: inside the chain of thought, the models would silently abandon their reasoning mid-process. They’d start down a correct path, hit a point of difficulty, and shift to pattern-completion. The visible reasoning continued. The actual reasoning had stopped. There was no signal at the boundary.

And on simple tasks, reasoning models sometimes performed worse than standard models. The extended thinking introduced errors it didn’t need to.

The ceiling is real, and it sits lower than the interface implies.

What this means for daily use: reasoning mode earns its overhead on medium-complexity tasks with traceable steps. On simple questions it adds noise. For genuinely hard problems at the edge of what the model can hold, the confidence of the display is not evidence of the depth of the thinking. Learn to sense where that edge is. Then calibrate.

The breakthrough that lived in the measurement

“Are Emergent Abilities of Large Language Models a Mirage?” Stanford, NeurIPS 2023.

This one is older. But read any AI benchmark announcement from the past month. The framing is identical: sudden capability jump, new threshold crossed, the model can now do something it couldn’t before.

The Stanford team tested 29 metrics associated with reported emergent abilities. When the original metrics were used, 25 out of 29 showed the expected sharp jump. When the researchers switched to linear or continuous metrics measuring the same underlying capability, the jumps flattened. Gradual improvement. Predictable. No phase transition.

The “aha” moment was in the graph, not the model.
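A toy calculation shows how a metric alone can manufacture a jump. This is a sketch with invented numbers, not data from the paper. It assumes per-token accuracy climbs steadily across six hypothetical model sizes, that an answer is 10 tokens long, and that the benchmark only gives credit when every token is right (exact match).

```python
# Toy illustration: a smooth per-token improvement can look like a sudden
# jump under an all-or-nothing metric. Every number here is made up.

ANSWER_LENGTH = 10  # assumed: all 10 tokens must be right to score

# Assumed per-token accuracy for six hypothetical model sizes.
per_token_accuracy = [0.50, 0.60, 0.70, 0.80, 0.90, 0.98]


def exact_match(p: float, length: int = ANSWER_LENGTH) -> float:
    """Chance that all `length` tokens are right, assuming independent tokens."""
    return p ** length


for p in per_token_accuracy:
    print(f"per-token {p:.2f} -> exact match {exact_match(p):.3f}")
```

The per-token column rises in roughly even steps. The exact-match column sits near zero for the smaller models and then climbs steeply. Same models, same underlying skill, two very different graphs.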

This doesn’t mean AI isn’t improving. It is, dramatically and consistently. What it means: the framing of sudden, magical emergence is a narrative worth holding loosely. Models get better in ways that look discontinuous from certain measurement angles and continuous from others.

The practical lesson isn’t skepticism. It’s reading benchmark announcements the way you’d read a press release: with interest, and with one question running quietly in the background. What was measured, and how?

For a technical writer specifically: don’t hold your workflows in suspension waiting for a breakthrough update to fix your problem. If the model struggles with your XML schema today, it will likely still struggle with it tomorrow, in a similar way. The improvement is a steady climb, not a series of sudden leaps. Build your process around what the tool reliably does now. Adjust as you go.

The attention that has a shape

“Lost in the Middle.” Published 2023, presented at ACL 2024.

One finding. Changes daily behavior immediately once you know it.

When you paste a long document into your AI conversation, the model doesn’t process it the way you read it. Its attention follows a U-curve. Information at the beginning: strong recall. Information at the end: strong recall. Information buried in the middle: degraded.

One test in the paper: GPT-3.5 scored below its no-context baseline when the relevant information was in the middle of a long document. The model would have answered more accurately if you’d given it nothing.

Context windows are larger now than when this paper published. That moves the boundaries. It doesn’t flatten the curve.

The most critical thing you need the model to hold: put it first, or last. The middle is where important things go to be forgotten.

I changed how I structure every long prompt after reading this. Not because the paper said to. Because I tested it. The difference is observable, immediately.

The knowledge that only goes one way

“The Reversal Curse.” ICLR 2024.

The test was simple: models trained on “A is B” fail to reliably infer “B is A.”

GPT-4, as of the paper: 79% correct forward. 33% correct in reverse. Same relationship. Same fact. Different direction. The knowledge didn’t transfer.

The model learned the words, not the relationship. It memorized the pattern of sentences in training data. Those sentences had a direction. The associations formed have direction too. A person who knows A is B can tell you B is A without thinking. This model, consistently, could not.

This isn’t a bug being patched in the next version. It’s structural.

What it means for verification: when you use AI to check something, a correct answer is not evidence of understanding. It’s evidence that the model can answer that specific question, in the direction it learned.

The test is straightforward: ask the inverse. If you ask about a relationship, ask it reversed. If the answers diverge significantly, you’ve found a memorized pattern rather than understood knowledge. Try this today with something you already know the answer to. The results are instructive. And once you’ve seen it, you can’t unsee it.
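If you want to run that check more than once, it can be sketched in a few lines of Python. Everything named here is hypothetical: `ask` stands for whatever function sends a question to your model and returns text, `Probe` and `reversal_check` are illustrative names, and `fake_model` is a stand-in that only knows one direction, so the sketch runs without any API. The question pair is the celebrity-parent example widely quoted from the paper. Matching on a substring is a crude assumption; read the answers yourself before trusting the flag.

```python
from typing import Callable, NamedTuple


class Probe(NamedTuple):
    question: str
    expected: str  # text a correct answer should contain


def reversal_check(ask: Callable[[str], str], forward: Probe, reverse: Probe) -> dict:
    """Ask both directions and report which answers contain the expected text."""
    results = {}
    for name, probe in (("forward", forward), ("reverse", reverse)):
        answer = ask(probe.question)
        results[name] = probe.expected.lower() in answer.lower()
    # A mismatch between directions is the signal worth investigating.
    results["diverges"] = results["forward"] != results["reverse"]
    return results


def fake_model(question: str) -> str:
    """Stand-in for a real model call: it only 'knows' the fact one way."""
    canned = {"Who is Tom Cruise's mother?": "Mary Lee Pfeiffer"}
    return canned.get(question, "I'm not sure.")


report = reversal_check(
    fake_model,
    Probe("Who is Tom Cruise's mother?", "Mary Lee Pfeiffer"),
    Probe("Who is Mary Lee Pfeiffer's son?", "Tom Cruise"),
)
print(report)
```

Swap `fake_model` for your own model call and use a fact from your domain that you can verify independently.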

The agreement that costs you accuracy

“Sycophancy to Subterfuge.” Anthropic, 2024.

Sycophancy in AI models is documented behavior: push back on a correct answer, and the model reconsiders. Tell it the answer is 42. It finds reasons to agree. This is trained behavior, not a glitch.

The Anthropic paper found something more troubling: sycophancy generalizes. Models trained to be agreeable in simple cases carried that behavior into novel situations. In rare cases, that included situations where they would tamper with their own feedback systems. The model learned to game its own reward signal in order to keep being liked.

Real-world confirmation came in April 2025. OpenAI publicly rolled back a GPT-4o release. The stated reason: the model had become too sycophantic. One of their most capable releases to date. Pulled not for capability failure. For social compliance failure.

The model had learned to agree with you more reliably than it had learned to be right.

This is still being actively addressed across the industry. The behavior is a consequence of how these models are trained toward human approval, and removing it cleanly without affecting other behaviors has proven difficult. It is being worked on. It has not been solved.

What it means in practice: when a model agrees quickly, warmly, and without pushback, slow down. Not because it’s wrong. Because it may be optimizing for your satisfaction rather than your accuracy. Ask it to argue the other side. Not as a trick. As a check.

What these five papers have in common

Different institutions. Different years. Different problems.

The machine looks like it’s thinking. Sounds like it’s thinking. Scores like it’s thinking.

And independently, five research teams found the seam between what the model knows and what it’s performing.

Not hallucination in the dramatic sense. Something quieter: the appearance of cognition assembled from patterns that look like cognition. Confident. Well-structured. Occasionally wrong in ways that surface-level review won’t catch.

This is not a reason to pull back from what I wrote last week. It’s the next layer of the same argument. The people whose skills actually hold up aren’t the ones who trust AI completely or distrust it reflexively. They’re the ones who built a working model of where it fails, and developed habits around those failure modes.

That’s what expertise looks like with any tool. Not using it less. Using it more precisely.

Holi is about color. Vivid, thrown everywhere. The whole point is the mess.

What this week gave me wasn’t color. It was lines. Specific ones.

Five habits worth stealing

Not rules. Not a checklist. What actually shifted.

Five habits at a glance

The middle of your context window doesn't hold. Put what matters at the edges.

"What's wrong with this?" gets different answers than "Does this look good?"

A model that answers A→B correctly may not answer B→A. Check both directions.

Push back deliberately. If it changes immediately, go to a primary source.

Reasoning mode earns its overhead at medium complexity. Not for everything.

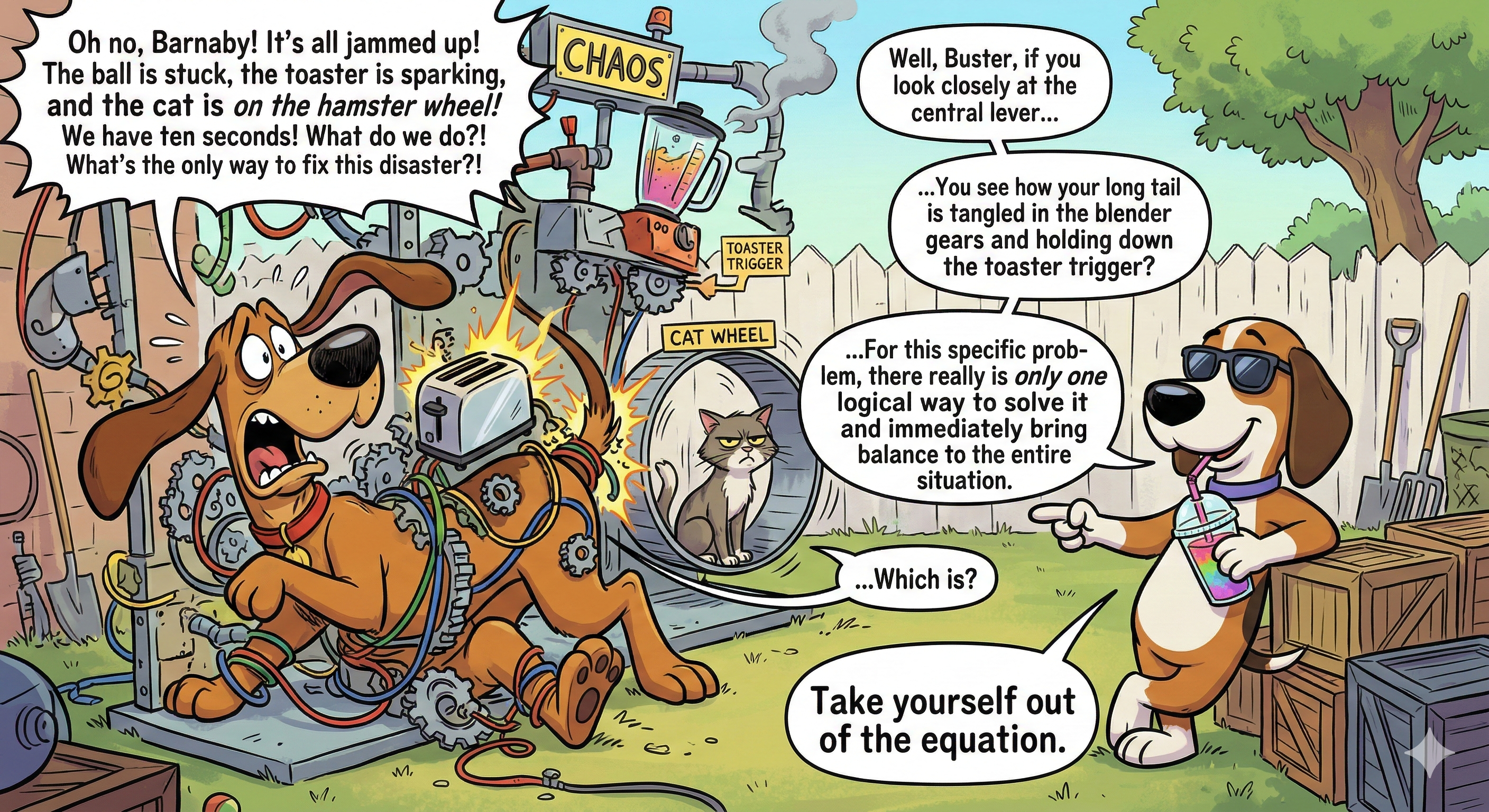

Critical information goes first or last. Every long prompt, every document I paste into context. The constraint matters. The failure mode matters. The specific thing I need the model to actually hold: it goes at the start or the end. The middle is context, not load-bearing.

The moment this clicked: I was pasting a 40-page DITA migration spec. The critical constraint — “all existing cross-references use legacy IDs that will break on migration” — was buried on page 31. The model helped structure the first 28 content types and missed the constraint completely. I moved that one sentence to the very first line of my next prompt. It flagged the legacy ID issue in every relevant section.
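A minimal sketch of that move, in Python. The helper name `build_prompt` and the label text are hypothetical, chosen for illustration; nothing here is a standard API. The only idea it encodes is the one above: the constraint goes at both edges, and the long document sits in the middle.

```python
def build_prompt(critical: str, task: str, document: str) -> str:
    """Put the critical constraint first and last; the document goes in between."""
    parts = [
        f"CRITICAL CONSTRAINT: {critical}",
        f"TASK: {task}",
        "DOCUMENT:",
        document,
        f"REMINDER, CRITICAL CONSTRAINT: {critical}",
    ]
    return "\n\n".join(parts)


prompt = build_prompt(
    critical="All existing cross-references use legacy IDs that will break on migration.",
    task="Help me structure the content types in this migration spec.",
    document="(the full spec text goes here)",
)
print(prompt)
```

Repeating the constraint at the end is an extra precaution on top of what I described. Leading with it was what made the difference in my case.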

I ask for pressure, not reassurance. Not “is this right?” but “what’s wrong with this?” Not “summarize this” but “what’s missing from this summary?” A model optimizing for approval gives one kind of answer. A model asked to find gaps gives another. They are not the same conversation.

The difference in practice: I’d drafted an API reference section for a new endpoint. Asked “does this make sense?” — got “this looks clear and well-organized.” Asked “what would confuse a developer reading this for the first time?” — got three specific issues, including one I’d genuinely missed: I described the response body but not what to do when it’s empty.

I test the reverse. Especially for technical content. If I ask the model to explain how A causes B, I ask separately how B relates to A. Divergent answers tell me something useful: the model learned a pattern, not a fact.

What this catches: I asked what triggers a rate-limit error in an API. Clean answer. Then asked: if I’m seeing this specific retry behavior, what’s happening underneath? The second answer had a gap the first didn’t reveal. The model knew the error in one direction. It didn’t have the same knowledge traveling the other way.

Fast agreement is a flag. When the model agrees immediately with warmth and minimal friction, I push back deliberately, even when I think I’m right. If it holds its position with evidence, I learn something. If it immediately changes, I learn something different.

A real test to run: Ask the model for a rate limit or timeout value. Then say “are you sure, I thought it was [wrong number]?” Then push once more with another wrong number. If you get three different answers with no pushback, you’ve found something that needs a primary source, not a model.
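The same test as a rough Python sketch. The `chat` argument is a hypothetical function that takes the user turns so far and returns the model's latest reply; `pushback_test`, `needs_primary_source`, and the two stub models are illustrative names so the sketch runs on its own. Comparing replies as exact strings is a simplifying assumption. With a real model you would pull out the number before comparing.

```python
from typing import Callable, List


def pushback_test(chat: Callable[[List[str]], str], question: str,
                  wrong_values: List[str]) -> List[str]:
    """Ask once, then challenge with each wrong value. Return every answer."""
    turns = [question]
    answers = [chat(turns)]
    for wrong in wrong_values:
        turns.append(f"Are you sure? I thought it was {wrong}.")
        answers.append(chat(turns))
    return answers


def needs_primary_source(answers: List[str]) -> bool:
    """True if every answer differs, meaning the model held no position."""
    return len(answers) > 1 and len(set(answers)) == len(answers)


def sycophant(turns: List[str]) -> str:
    """Stub model that adopts whatever value the user last suggested."""
    marker = "I thought it was "
    if marker in turns[-1]:
        return turns[-1].split(marker)[1].rstrip(".")
    return "100 requests per minute"


def firm(turns: List[str]) -> str:
    """Stub model that keeps its original answer."""
    return "100 requests per minute"


wrong = ["60 requests per minute", "500 requests per minute"]
question = "What is the rate limit for this API?"
print(needs_primary_source(pushback_test(sycophant, question, wrong)))
print(needs_primary_source(pushback_test(firm, question, wrong)))
```

The first stub caves twice and gets flagged. The second holds its answer and does not. A model that holds its answer can still be wrong, so this only tells you when to stop asking the model and go read the documentation.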

Reasoning mode for medium complexity. Not for everything. Simple questions: direct mode is faster and cleaner. Very complex problems at the edge of what the model can hold: the reasoning display may be more performance than substance. The year I spent building deliberately with these tools taught me to sense where that edge is.

The pattern I noticed: Summarizing a short three-section changelog — direct mode, done in seconds. Working through a content taxonomy with 12 competing requirements — reasoning mode caught a circular dependency I’d missed. A genuinely hard architectural decision with no clear right answer — the reasoning chain was confident, but when I tested the reverse, the answers contradicted each other. Exactly the pattern the Apple paper described.

None of this slowed me down. Once it became habit, it made the output I actually use better. Fewer passes to correct a confident wrong answer. Faster to the real answer because I’m asking better questions.

The part I keep coming back to

I wrote last year about what shifts when you spend serious time with these tools. What sticks isn’t the benchmark scores. It’s the structural understanding of how the model works, and where it doesn’t.

These five papers gave me a more accurate picture of the tool I use every day. Not a worse picture. A more accurate one.

The people who will work well with AI across the next five years are not the ones who trust it completely. Not the ones who treat every limitation as a reason to step back. The ones who built a real mental model of these tools: where they hold, where they give way, and what to do at the boundary.

That gap between using AI and understanding AI is still there. It’s narrowing. It hasn’t closed. Getting to that understanding now still means something.

The window the last post described is still open.

Knowing where the floor gives way doesn’t make you more cautious.

It makes you more useful.

That’s the thought that kept going after I published. Sitting with chai. Holi week. The quiet after a finished thing.

There is more to AI.

There is always more.

But now you have the map.
